LLM Validity via Enhanced Conformal Prediction: Conditional Guarantees, Score Learning, and Response-Level Calibration Under Dependent Claims

Finite-sample validity guarantees for large language model (LLM) outputs are attractive because they are post-hoc and model-agnostic, but they are fragile when prompts are heterogeneous and factuality signals are noisy.

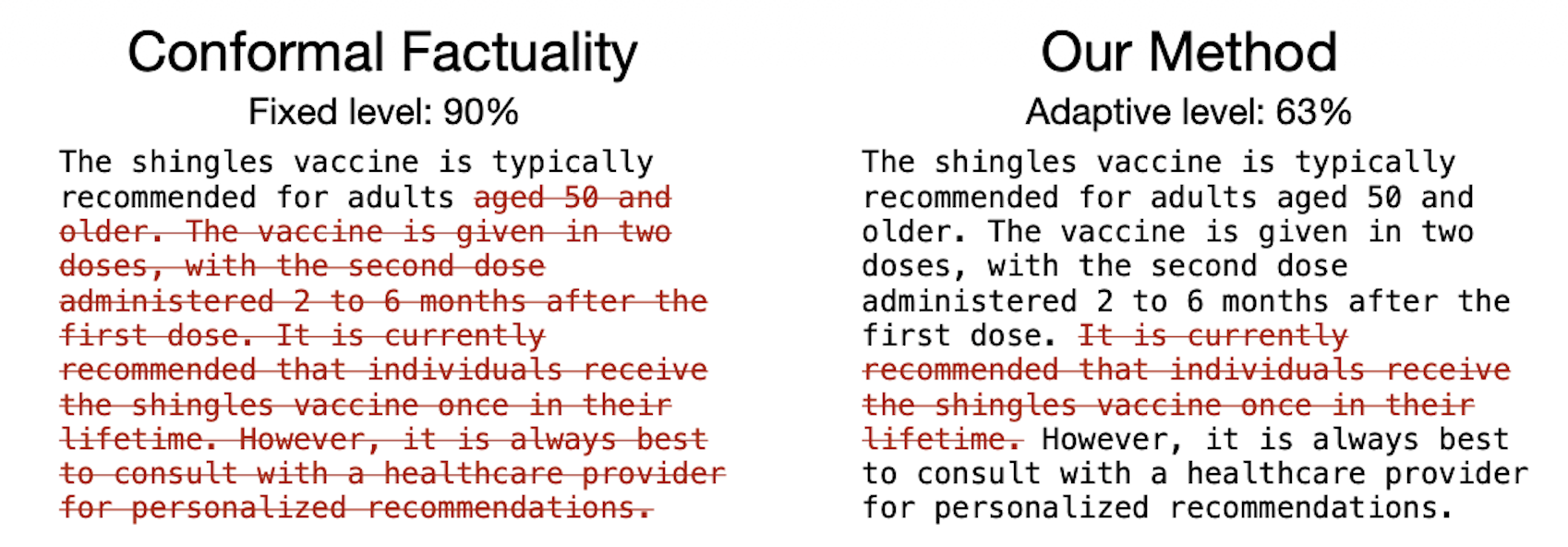

Cherian et al. (2024) propose enhanced conformal methods for factuality filtering that (i) replace marginal guarantees with function-class conditional guarantees and (ii) improve utility via level-adaptive calibration and conditional boosting that differentiates through conditional conformal cutoffs.

This discussion paper reviews the conformal prediction and LLM factuality context, presents the selected paper, and proposes a future direction: response-level conformalization under dependent claims. The key idea is to treat an entire response as the exchangeable unit, use blocked calibration that keeps all claims from a response together, and calibrate response-level tail losses conditionally on prompt/response features, so that guarantees align with user-facing risk when claim errors are dependent.
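To make the blocked, response-level idea concrete, the following is a minimal sketch of marginal split conformal filtering in which each response contributes exactly one calibration score. It is not the method of Cherian et al. (2024), and it omits the conditional calibration on prompt/response features. It assumes every claim carries a confidence score (higher means more likely correct) and a binary correctness label; the function names and the toy data are hypothetical.

```python
import math

def response_scores(claim_scores, claim_labels, response_ids):
    """Collapse claims into one score per response (the exchangeable unit).

    The score of a response is the highest confidence among its false
    claims, or -inf if every claim is correct. Grouping by response id
    keeps dependent claims together in a single calibration point.
    """
    per_response = {}
    for score, label, rid in zip(claim_scores, claim_labels, response_ids):
        per_response.setdefault(rid, -math.inf)
        if label == 0:  # false claim (assumed encoding: 1 = correct, 0 = false)
            per_response[rid] = max(per_response[rid], score)
    return per_response

def conformal_threshold(scores, alpha):
    """Split conformal cutoff: the ceil((n + 1)(1 - alpha))-th smallest score."""
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # too few calibration responses: retain nothing
    return sorted(scores)[k - 1]

def filter_claims(claim_scores, tau):
    """Retain only claims whose confidence strictly exceeds the cutoff."""
    return [score > tau for score in claim_scores]

# Toy calibration set: four responses with two claims each (hypothetical data).
ids = ["r0", "r0", "r1", "r1", "r2", "r2", "r3", "r3"]
scores = [0.9, 0.2, 0.8, 0.6, 0.7, 0.5, 0.95, 0.4]
labels = [1, 0, 1, 1, 0, 1, 1, 0]

calibration = response_scores(scores, labels, ids)
tau = conformal_threshold(list(calibration.values()), alpha=0.5)
kept = filter_claims([0.9, 0.3, 0.5], tau)
```

Under exchangeability of responses (not of individual claims), every retained claim of a new response is correct with probability at least 1 - alpha, regardless of how claim errors depend on one another within a response.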

Notes:

- This work is a discussion of Cherian et al. (2024).

- The authors released a filtered MedLFQA benchmark with non-health-related prompts removed, as well as the generated/parsed text and experiment notebooks.

- Their conditional conformal inference Python package now supports level-adaptive conformal prediction.